LEGO is doing okay

This nice visualisation of LEGO Group annual revenue shows that after a lull in the late 2010s, there has been incredible growth since 2020 – presumably somewhat assisted by pandemic lockdowns?

As someone who enjoys LEGO but is running out of storage space, I’ve been trying out BrickBorrow for the last year, where for a subscription (and some postage each time) you can borrow LEGO sets.

A well-designed feature restricts big sets to those who have been subscribed for 3 months – this shows reliability, and also helps with availability of those sets. Now that BrickBorrow have shifted to a Royal Mail sticker postage method, and added a filter on the sets to only show those that are available, I recommend it!

I Am Not Left-Handed

This is the name of a trope where a character reveals they were previously fighting with a self-imposed handicap, which they then shed to fight at their true power. This is a classic technique for shallow power-fantasy stories, but despite that I find it incredibly compelling every time.

My favourite concentrated example of it is this (now very old!) Anime Music Video which edits together a particular fight from Naruto, which I also appreciate for how it establishes a rooting interest in one of the combatants without any dialogue:

Temp Tracks in film

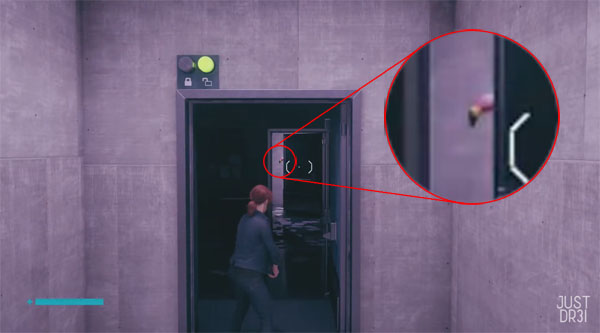

In this episode of Every Frame a Painting, Tony Zhou and Taylor Ramos break down the way in which ‘safe’ creative choices around music in the Marvel films have led to a weaker overall effect:

Towards the end they highlight the problem of the ‘Temp Track’: a piece of film is edited to a suitable existing piece of music, but the film-makers work with that version for so long they become wedded to the way it sounds, so when they eventually commission original music, they request something almost identical. In a spin-off video, EFAP show a lot of examples.

The opposite of this is the method used by Tom Tykwer (director of Run Lola Run (1998)), in which the soundtrack is composed first. You can hear a bit about it in this segment of the making of The Matrix Resurrections, and it does seem very effective.

While we’re on the topic, I personally greatly enjoyed The Matrix Resurrections (2021) for its metatextual resonance rather than literal content, apparently in marked contrast to most people. But that is a story for another time.

Dancing at the end of films

This is a Bollywood staple: after the film reaches its narrative conclusion, even if it’s not a musical and there has been no dancing before, the film ends with the whole cast performing an elaborate dance number (TV Trope: Dance Party Ending). This can have a fascinating effect on how you feel about the film as a whole, sometimes redeeming antagonists, bringing back characters who died, or just providing an emotional catharsis after an otherwise tense time.

Unfortunately I suspect that citing my favourite Western films that do this is also a strange kind of spoiler. So instead I will recommend to you several films that I have seen recently, at least one of which uses this to good effect, but all of which I think are worth watching for one reason or another. Some will even be improved by you thinking there might be a dance at the end, even if there isn’t!

- Knight and Day (2010), Disney+, a strange clash of genres that works great… some of the time

- Labyrinth (1986)

- Medusa Deluxe (2022), a ‘single-take’ hairdressing competition murder mystery

- Saltburn (2023), directed by Emerald Fennell, whose previous film Promising Young Woman (2020) I also recommend… for adults who like ambiguous protagonists

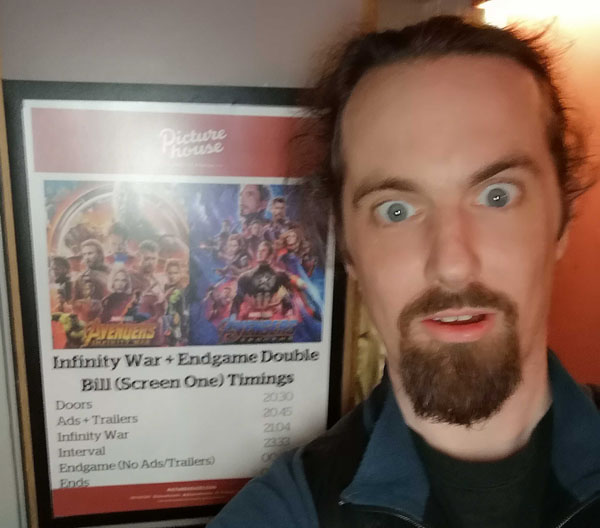

- The Marvels (2023), Disney+, MCU take some creative risks! Some of which work!

- Teenage Mutant Ninja Turtles: Mutant Mayhem (2023)

- White Noise (2022), Netflix, weirder and less ‘fun’ than the trailer implies (but I still recommend it)

- The Zone of Interest (2023)

Dancing in a fursuit

Probably best to jump in with no context and watch this one-minute clip, which annoyingly I can’t embed so you will have to actually click on it:

https://www.youtube.com/shorts/L03td6_rOvk

Wow! What the heck was that? This ad-laden article lays out the whole story. Gintan is some kind of K-pop star in his own right, but is now known for performing at ‘Random Dance’ events in this very distinctive fursuit. In these events, clips from K-pop songs with popular choreography are played, and anyone who knows the routine jumps into the centre to perform it. There’s a delightfully over-academic essay about these events here.

What’s really impressive is that not only has Gintan memorised so many of these routines, and not only can he perform them with incredible precision and panache on demand, he does all of this while wearing a heavy fursuit – which is like a really fun version of the ‘I Am Not Left-Handed’ trope described above!

On top of that, the slightly serious expression on the suit is a great contrast with the frivolousness of the whole thing, and it always brings a smile to my face.

Find lots more Gintan footage like this with this YouTube search.

The Meta-Problem of Consciousness

Let’s get a bit more serious for a moment.

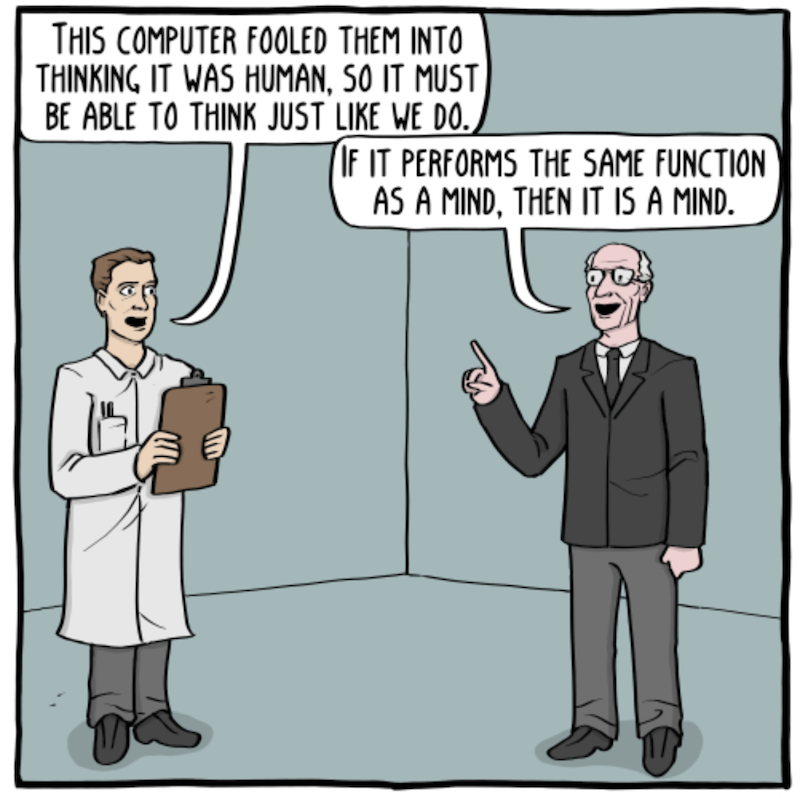

The Hard Problem of Consciousness is a philosophical one: to use Wikipedia’s summary, it asks why and how do we experience qualia, phenomenal consciousness, and subjective experiences? Related questions: where does consciousness reside? Is it a quantum effect? Is it separate from our physical forms in some way?

I never found this problem convincing at all. Why would we expect consciousness to feel any different to the way it actually does? Literally our only reference case is how we experience it, so on what grounds can we say this is surprising?

I first read about this some decades ago, so I was delighted to find that in 2018 philosopher David Chalmers proposed a more precise and slightly sassy formulation of my line of thinking: the “Meta-problem of Consciousness”. This is “the problem of explaining why we think that there is a [hard] problem of consciousness.”

Yes! That does indeed seem to be the more pressing problem.

The Temp Track that went well

I know of one example of a film that used a temp track to edit a key scene, and (in my opinion) this actually produced an excellent final result. Even as someone quite averse to spoilers, in this particular case I don’t think reading about it – or even watching the scene on its own – actually spoils the film!

However, if you worry even more about spoilers than me, you might not want to know about it. So, just know that it is from one of the films listed above, I’ll be talking about it after the extended Thing about creativity-over-time below, and it is the last Thing of this episode so you can easily skip it if you want. Be ready!

Creativity over time: productivity and scope

I’m very interested in the creative process. The brain is a machine that can come up with ideas or whole creative works, but the methods by which you can best achieve that are not obvious.

When it comes to long-form works this is particularly tricky. Here’s a segmentation I came up with for thinking about this:

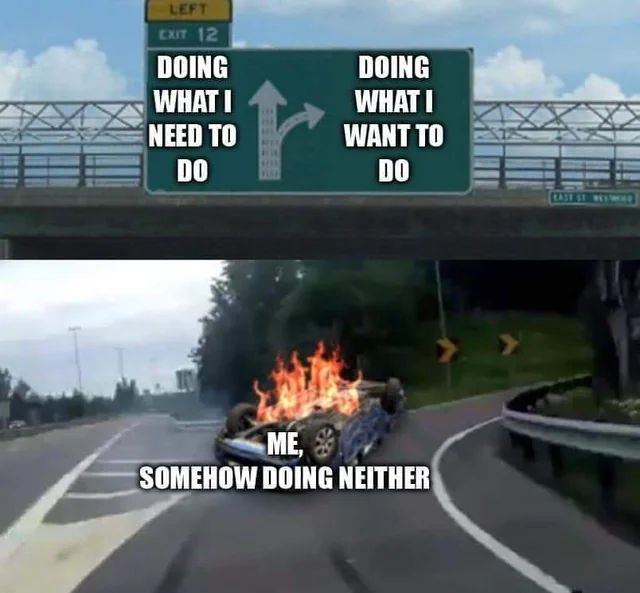

Planning style: Plan in advance vs. Freestyle

Routine style: Fixed schedule vs. When it’s ready

The pro/con on these is pretty clear, at least for narrative works.

Plan in advance

Pro: A solid overall story that wraps up satisfyingly (even if you have to alter it a bit as you go)

Con: Characters may not act consistently as you’re forcing them to hit story beats

Freestyle

Pro: Characters and situations evolve naturally

Con: Plot may spiral out of control and not go anywhere

Fixed schedule

Pro: Progress is made consistently, can retain and build an audience

Con: Quality may suffer

When it’s ready

Pro: Maximise quality

Con: Easy to put off and polish indefinitely

If you know me, you know what’s coming next… a consideration of the four combinations!

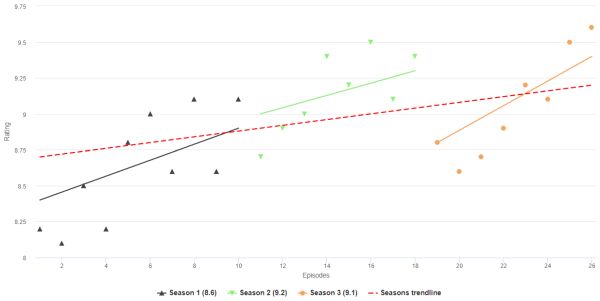

The four approaches to ongoing narrative

As with any classification of creative works, some of this is subjective or debatable for many reasons. Regardless, here are some examples:

Plan in advance, fixed schedule

Star Wars original trilogy (sort-of), Babylon 5, Breaking Bad.

Plan in advance, when it’s ready

The Gentleman Bastard book series

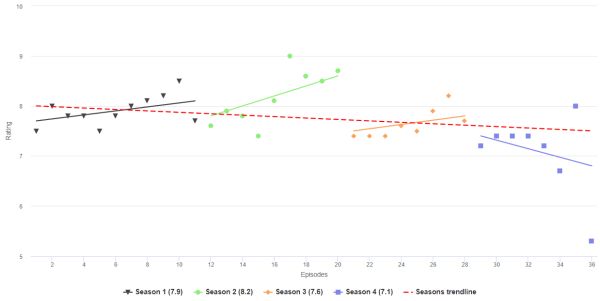

Freestyle, fixed schedule

Questionable Content, Star Wars sequel trilogy, Lost

Freestyle, when it’s ready

Game of Thrones, Dresden Codak, Confinement animation

Now, just from writing down the first examples I could think of, some very natural patterns emerge.

A plot planned in advance and delivered to a fixed schedule has produced some of the most beloved completed works there are.

In opposition to that, Freestyle and When it’s Ready has produced works that I think have an even more intense fandom (as it maximises quality), but frequently slow down and stall for one reason or another.

Freestyle with a fixed schedule generally seems like a bad idea, but over long time periods works in a sort of ‘soap opera’ format.

Plan in advance, release when ready seems to be very rare, and intuitively seems the most likely to stall through procrastination, anxiety or writer’s block.

Some case studies in slowed progress

Game of Thrones (or, to use its proper title, the ‘A Song of Ice and Fire’ series) is perhaps the apex example of ‘Freestyle, when it’s ready’ slowing to a crawl (or possibly a halt). Here’s a chart showing the release date and length of each book, running up to the present day when ‘The Winds of Winter’ has not yet come out.

To be clear, I don’t consider this a failing. I think the books are as well-loved as they are precisely because this method of production maximises quality and character. However, expectations for a timely finish should be held quite low.

A recent example was shared with me by Laurence: Confinement, a series of animations based on the SCP Foundation (referenced in Things November 2022). These had an even more dramatic stall: episode 7 was extremely popular and drove many to support the creator’s Patreon. However, about 3.5 years later the creator admitted they didn’t have it in them to make episode 8 any more and formally closed all their social channels. (There’s a lot more drama to that, which you can read about here).

Here’s how those releases looked, running the x-axis to the point when the project was officially cancelled:

In what is (I think) an example of the rare “Planned in advance, release when ready”, the Bee and Puppycat animation managed to reach a pretty satisfying conclusion (so far) about 9 years after it began – with an astonishing 83% of the run-time dropping all at once at the very end:

The slowness of early releases was due to a very small team working on the animation. Then a series of complicated licensing delays and disasters conspired to delay later releases. But in the end, a soft reboot / series 2 eventually dropped all at once on Netflix in September 2022.

I’ll tell you why Bee and Puppycat is so good another time, but for now just know that when I audited all 50+ in-jokes I share with Clare, this series accounted for more of them than anything else.

While less narrative in nature, the web comic Hyperbole and a Half had a very prolonged hiatus. In the dangerous “Freestyle, release when it’s ready” category, but without the burden of an overarching narrative, artist Allie Brosh published a series of excellent and very personal hybrid comic/narratives from 2009 to 2010. Output slowed in 2011 due to mental health issues, a medical condition, and a focus on turning the content into a book. Things seemed to end with the book coming out in October 2013 and, at the same time, the truly excellent “Menace” strip being published (shortly after the Bee and Puppycat pilot aired).

Then, nothing, for a very long time. This was also quite concerning given the prior comic was a very personal one about coming to terms (perhaps?) with depression. On the other hand, author Allie Brosh had said “In the world of writing internet content, there’s all this talk of ‘maintaining an audience’ and ‘staying on the radar,’ but I’d rather just work really hard for a really long time on one thing that I feel really good about publishing.”

So it was that a sequel book “Solutions and Other Problems”, announced in 2015, eventually came out in September 2020, 7 years after the last published work (and about two years before the Netflix Bee and Puppycat series finally dropped, as it happens). The content of that book follows the previous form, and also details some of the things that happened to Brosh in the intervening years, and the reason for the gap in public output becomes devastatingly clear. I highly recommend both books.

Finally, in that rare “Plan in advance, release when ready” category, Scott Lynch published The Lies of Locke Lamora in 2006. Nick recommended it to me, and I enjoyed it quite a lot, but it seemed like the author liked world-building a little too much. As it was the first in a planned series of 7 books called the ‘Gentleman Bastard’ series, I decided to wait until the series finished before reading on.

A second book appeared in 2007, a third in 2013… and at the time of writing, nothing else.

Scott Lynch wrote very candidly in 2022 about what has been going on. He has in fact been writing very productively, but a kind of anxiety is holding him back from publishing any of it, including updates about how it is going. (As a Things reader you probably enjoy ‘meta’ things, so you should read that post).

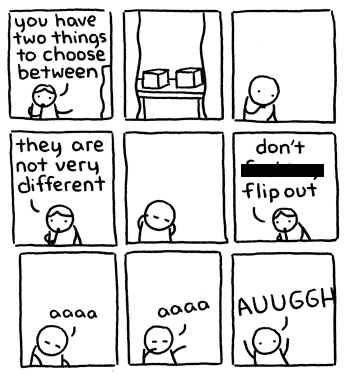

Here’s the point where we get meta about it: I recognise that problem because that is exactly what has been happening to me since 2020 (when a pandemic happened, funnily enough). I have 4 rather long and pretty much complete blog posts about various topics, none of which I felt confident enough about to post. This hasn’t happened to me before!

As a Things reader you might also recognise that even aside from that, the rate of Things posts gets slower and slower (with the surprise exception of this one… at the time I’m writing this sentence, anyway). That is something I find a bit harder to explain.

Having written all the above, it does make me wonder: should I commit to a schedule for Things? Wouldn’t once a quarter be a completely reasonable one to try?

Let’s say this is the 2024 Quarter 1 Things and see how things go from there!

The Temp Track that Went Well: not a spoiler, but might be if you’re very worried in which case don’t read this

Are you ready?

So this is about a scene that happens at the very end of one of the films in my list above.

Specifically a scene where everyone starts dancing

That’s enough line spacing, so here we go. Perhaps you are familiar with LCD Soundsystem’s 2005 song “Daft Punk Is Playing at My House”. It seems to be their 3rd most popular song on Spotify, and 2nd most popular song on YouTube. It is rather repetitive but has a very compelling hook:

(The music video references the Things-favourite Michel Gondry-directed music video to Daft Punk’s “Around the World”, another repetitive but compelling song).

So at the end of White Noise (2022), there is a scene where the characters visit the excellently set-dressed 80s supermarket and everyone there starts dancing as the credits roll. Incidentally, this tipped the movie over from something I thought was interesting-but-a-bit-weird into excellent.

LCD Soundsystem’s ‘Daft Punk Is Playing at My House’ was used as the temp track for this scene – and indeed was the track the dancing was choreographed and performed to, which ordinarily I would say is going a bit too far for a temp track. However, here’s the twist: they commissioned LCD Soundsystem themselves to write a new track to play over the scene instead.

I had previously written about how fans of a band often cling to the past and are less keen (at least initially) on new musical directions, with the example of the audience response to a DJ Shadow gig (“Artistic Stasis or Death!”). So it seems like an outrageously bold thing to ask a band to make a new song so specifically similar to a well-loved old one.

And the beauty of it is, LCD Soundsystem did it. They made a new track – “new body rhumba” – that for me is even better than its predecessor from 17 years earlier, and is completely perfect for this scene in the movie. You can listen to it here or just watch that scene itself (accepting that this is perhaps more of a spoiler, although not really given how loose the rules of continuity are when it comes to Dance Party Endings).

Side-note, this may just seem weird and boring without the context of the film leading up to it, or even with it since everything is subjective. But anyway, enjoy!

- Transmission ends